You know what it’s like, you need to fail your AG over but is it safe to fail over?

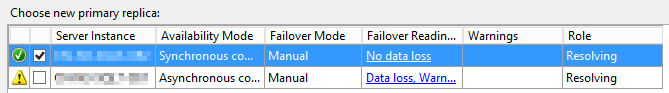

Perhaps you’ve clicked on ‘failover’ for the AG and there’s a little green tick and no data loss reported…

Or maybe you’ve checked out sys.dm_hadr_database_replica_cluster_states and ‘is_failover_ready’ is reporting a 1.

So, you’re cool to failover, right? But hold those horses one cotton picking minute…

You’ve failed over but now you’re getting a ton of odd error messages or maybe things aren’t quite working quite as you or your users might expect.

But SQL Said The AG Was Failover Ready!

What SQL was really telling you was there there was no risk of data loss in the event of a failover but was it ‘really’ failover ready? Are there other things that you need to think about?

Agent Jobs

What about your SQL Agent jobs? Have you got jobs that perform actions on your data? If you have, do those jobs exist on the new primary? If they don’t then I’m happy to bet that whatever function that they were playing probably isn’t happening anymore.

One thing that I always want to make sure before I failover is, do I have all the relevant jobs ready to roll on the secondary server?

But what about the jobs that you’ve got on the old primary? There’s a fair chance that, if they’re doing any sort of data manipulation, they’re going to be failing.

So not only do you want to make sure all your jobs exist on the secondary nodes, there’s also some intelligence built in to check that they can run on the node in its current state (either primary or secondary).

I’ve built a little system for managing this for me automatically, I may have to blog about it at some point in the future.

Missing Logins

Another big thing that can trip you up is logins.

Firstly, does the login actually exist on the secondary server? If it’s not there then you can bet your bottom dollar that your users or applications are going to suddenly have issues when they try to access your databases.

Mismatched Passwords

So your login exists but your users are still having trouble! Does the password on the secondary match the password on the primary? I’ve seen plenty of times where the diligent DBA has created the login on all the nodes but for some reason, given them all a different password.

That’s not going to be helpful for your applications.

Mismatched SIDs

Finally, one more thing about logins that may trip you over. That’s the SID.

When you create a login, it’s allocated a unique SID. This SID is used to map the server login to a database user.

Why can this be a problem?

If you just go around creating the logins on each of your nodes, you’ll find that each of your logins will have a different SID. The problem here is that when it comes to failover, your database users will end up being orphaned from the login.

This can be fixed by running sp_change_users_login, I’ve known people to run this every minute via an Agent Job to automatically fix the issue in the event of a failover.

But why do that? Why not just make sure that the SIDs match in the first place? Instead of creating the login on each node, you can use sp_help_revlogin, this will script out the login, including the password and SID and will help you avoid the issues above.

Connection Strings

Another tripping point is your connection strings. Are these pointing to the AG listener or are they pointing at the name of the SQL Server?

If it’s the later, when you failover they’ll, at best be hitting a read-only copy of the database or, if the what was primary is now set to a non-readable secondary won’t be able to access anything at all.

But What Can I Do About It?

Before You Failover…

Missing and Mismatched Logins

It really is well worth your time making sure that your availability groups really are in a state that a failover isn’t going to result in a bunch of support calls landing on your desk.

There are a few things that I make sure that I’ve got in place as standard so that I know that I’m always in a good place to failover.

Firstly I want to make sure that there are no missing logins across my nodes and those that are there have a matching password and SID. This is made very simple with our own Undercover Catalogue, as I explained in this post (note, version 0.2 is now released and does contain the availability group information).

An alert on any mismatch in logins will also be part of the soon to be released v1.4 of our Undercover Inspector.

Missing Agent Jobs

The Undercover Catalogue also holds information on Agent Jobs, so another script that I regularly run will use that to find any jobs that are missing on any of the nodes as well as checking that the schedules match. That’ll get published soon as well

After You’ve Failed Over…

After a failover, even with all my pre-process checks in place I still like to make sure that there aren’t any tell-tale signs that we’ve got an issue. These are failed logins and failed jobs on both the SQL Server that we’ve failed over from and to.

To help me with this, I use a couple of procs that Adrian wrote, sp_FailedLogins and sp_FailedJobs

Hopefully you’ve found this post useful and it’ll help you make your failovers as painless as possible.